An interesting article was posted on Slashdot about water shortages, and about companies like Intel and Coke consuming large amounts of water that might otherwise be used for farming.

About 2.4 billion people live in “water-stressed” countries such as China, according to a 2009 report by the Pacific Institute, an Oakland, California-based nonprofit scientific research group.

[..]

China’s 1.33 billion people each have 2,117 cubic meters of water available per year, compared with 1,614 cubic meters in India and as much as 9,943 cubic meters in the U.S., according to the Food and Agriculture Organization of the United Nations.

Nothing new really if you have been paying attention for the past few years. I try very hard to keep this blog as technical as possible, no matter how strongly I feel like ranting against the government and society in general. In this case I’ll venture a bit outside of the technical realm, thanks to the above article mentioning Intel’s water-intensive business.

Another water-intensive business is data centers. Perhaps one of the more extreme examples is the SuperNap outside Las Vegas, where one person is quoted as saying it will require millions of gallons of water per day:

“They’re in the middle of the desert and will need almost 3 million gallons of water per day for blowdown and evaporation for their 30,000 ton evaporative cooling plant.”

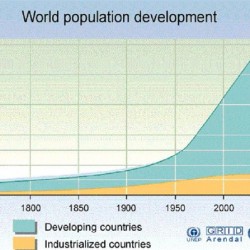

While I can’t vouch for the sources, just take a look at where some people think we are headed as far as global population growth is concerned. Notice the similar trend lines from those in the global warming camp, and the even more similar trend lines from those reporting on U.S. debt.

I’ll end the tangent here, but you can probably get an idea of where our civilization is headed.