(here is a link to in depth analysis on the issue)

Fortunately I didn’t notice any direct impact to anything I personally use. But I first got notification from one of the data center providers we use that they were having network problems they traced it down to memory errors and they frantically started planning for emergency memory upgrades across their facilities. My company does not and has never relied upon this data center for network connectivity so it never impacted us.

A short time later I noticed a new monitoring service that I am using sent out an outage email saying their service providers were having problems early this morning and they had migrated customers away from the affected data center(s).

Then I contacted one of the readers of my blog whom I met a few months ago and told him the story of my data center that is having this issue which sounded similar to a story he told me at the time about his data center provider. He replied with a link to this Reddit article which talks about how the internet routing table exceeded 512,000 routes for the first time today, and that is a hard limit in some older equipment which causes them to either fail, or to perform really slowly as some routes have to be processed in software instead of hardware.

I also came across this article (which I commented on) which mentions similar problems but no reference to BGP or routing tables (outside my comments at the bottom).

[..]as part of a widespread issue impacting major network providers including Comcast, AT&T, Time Warner and Verizon.

One of my co-workers said he was just poking around and could find no references to what has been going on today other than the aforementioned Reddit article. I too am surprised if so many providers are having issues that this hasn’t made more news.

(UPDATE – here is another article from zdnet)

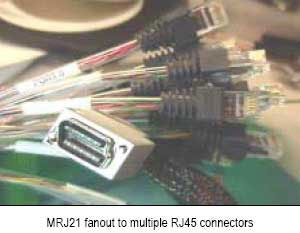

I looked at the BGP routing capacity of some core switches I had literally a decade ago and they could scale up to 1 million unique routes of BGP4 routes in hardware, and 2 million non unique (not quite sure what the difference is anything beyond static routing has never been my thing). I recall seeing routers again many years ago that could hold probably 10 times that (I think the main distinction between a switch and a router is the CPU and memory capacity ? at least for the bigger boxes with dozens to hundreds of ports?)

So it’s honestly puzzling to me how any service provider could be impacted by this today. How any equipment not capable of handling 512k routes is still in use in 2014 (I can understand for smaller orgs but not service providers). I suppose this also goes to show that there is wide spread lack of monitoring of these sorts of metrics. In the Reddit article there is mention of talks going on for months people knew this was coming — well apparently not everyone obviously.

Someone wasn’t watching the graphs.

I’m planning on writing a blog post on the aforementioned monitoring service I recently started using soon too, I’ve literally spent probably five thousand hours over the past 15 years doing custom monitoring stuff and this thing just makes me want to cry it’s so amazingly powerful and easy to use. In fact just yesterday I had someone email me about a MRTG document I wrote 12 years ago and how it’s still listed on the MRTG site even today (I asked the author to remove the link more than a year ago that was the last time someone asked me about it, that site has been offline for 10 years but is still available in the internet archive).

This post was just a quickie inspired by my co-worker who said he couldn’t find any info on this topic, so hey maybe I’m among the first to write about it.