[UPDATED – Some minor updates since my original post] I was at an Executive Briefing by HP, given by HP’s head of storage, and former 3PAR CEO David Scott. I suppose this is one good reason to be in the Bay Area – events like this didn’t really happen in Seattle.

I really didn’t know what to expect. I was looking forward to seeing David speak as I had not heard him before; his accent, oddly enough, surprised me.

He covered a lot of topics. It was clear, of course, that he was more passionate about 3PAR than Lefthand, Ibrix, XP or EVA, which is not surprising.

The meeting was not technical enough to get any of my previously mentioned questions answered; it seemed very geared towards the PHB crowd.

HP Storage Hits

3PAR

One word: Duh. The crown jewel of the HP storage strategy.

He emphasized the 3PAR architecture over and over: how it’s the platform powering 7 of the top 10 clouds out there, and how the design lets them handle unpredictable workloads. You know this stuff by now, I don’t need to repeat it.

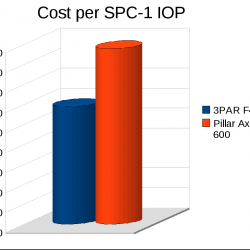

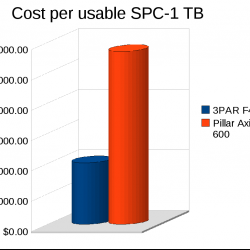

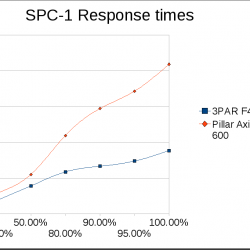

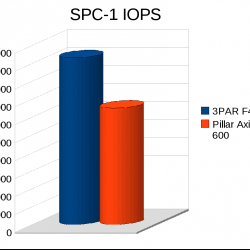

David pointed out an interesting tidbit with regard to their latest SPC-1 announcement: he compared the I/O performance and cost per usable TB of the V800 to a Texas Memory Systems all-flash array that was tested earlier this year.

The V800 outperformed the TMS system by 50,000 IOPS, and came in at a cost per usable TB of only about $13,000 vs $50,000/TB for the TMS box.

Cost per I/O, which he did not mention, certainly favored the TMS system ($1.05), but I thought the comparison was still a good one – we can give you the performance of flash and still give you a metric ton of disk space at the same time. Well, if you want to get technical, I guesstimate the fully loaded V800 weighs in at 13,160 pounds, or about 6 metric tons.
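For anyone who wants to check my napkin math, here is a minimal Python sketch. It only uses the figures quoted above (my 13,160 pound guesstimate and the rough per-TB prices David showed); the function name is just something I made up for illustration, and none of this is pulled from the SPC-1 reports themselves.

```python
# Back-of-the-envelope check using only the numbers quoted in this post.
LB_PER_KG = 2.20462262  # standard pounds-per-kilogram conversion factor


def pounds_to_metric_tons(pounds: float) -> float:
    """Convert pounds to metric tons (1 metric ton = 1,000 kg)."""
    return pounds / LB_PER_KG / 1000.0


v800_weight_lb = 13_160      # my guesstimate for a fully loaded V800
v800_cost_per_tb = 13_000    # approximate $/usable TB quoted for the V800
tms_cost_per_tb = 50_000     # approximate $/usable TB quoted for the TMS box

v800_tons = pounds_to_metric_tons(v800_weight_lb)
cost_multiple = tms_cost_per_tb / v800_cost_per_tb

print(f"V800 weight: about {v800_tons:.2f} metric tons")
print(f"TMS costs roughly {cost_multiple:.1f}x more per usable TB")
```

So "a metric ton of disk space" undersells it by a factor of about six.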

Of course flash certainly has its use cases; if you don’t have a lot of data it doesn’t make sense to invest in 2,000 spinning rust buckets to get to 450,000 IOPS.

Peer Motion

Peer motion – both a hit and a miss. It’s a hit because it’s a really neat technology: the ability to non-disruptively migrate between storage systems without 3rd party appliances. The miss, well, I’ll talk about that below.

He compared peer motion to the likes of Hitachi’s USP/VSP, IBM’s SVC, and EMC’s VPLEX, which are all expensive, complicated bolt-on solutions. Seemed reasonable.

Lefthand VSA

It’s a good concept, and it’s nice to see it as a supported platform. David mentioned that VMware themselves originally tried to acquire Lefthand (or wanted to; I don’t know if any official offer was made) because they saw the value in the technology – and of course VMware recently introduced something kinda-sorta-similar to the Lefthand VSA in vSphere 5, though it seems not quite as flexible or as scalable.

I’m not sure I see much value in the P4000 appliances by contrast; I hear that doing RAID 5 or, worse yet, RAID 6 on P4000 is just asking for a whole lotta pain.

StoreOnce De-duplication

It sounds like it has a lot of promise. I’ll put it in the hit column for now, as it’s still a young technology and it’ll take time to see where it goes. But the basic concept is a single de-duplication technology for all of your data. He contrasted this with EMC’s strategy, for example, where they have client side de-dupe with their software backup products, inline de-dupe with Data Domain, and primary storage dedupe – none of which are compatible with each other. Who knows, by the time HP gets it right with StoreOnce maybe EMC and others will get it right too.

I’m still not sold myself on the advantages of dedupe outside of things like backups and VDI. I’ve sat through what seems like a dozen NetApp presentations on the topic, so I have had the marketing shoved down my throat many times. I came to this realization a few years ago during an eval test of some Data Domain gear. I’ll be honest and admit I did not fully comprehend the technicals behind de-duplication at the time, and I expected pretty good results from feeding it tens of gigabytes of uncompressed text data. It turns out I was wrong, and I realized why my assumption was incorrect to begin with.

Now data compression, on the other hand, is another animal entirely. Being able to support inline data compression without suffering much or any I/O hit really would be nice to see (I am aware there are one or more vendors out there that offer this technology now).
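To illustrate the difference between the two, here is a toy Python sketch. Big caveat: this is a simple fixed-block model I put together for illustration, with a made-up 4 KB block size and made-up sample data; it is not how Data Domain, StoreOnce or any other product actually chunks data. The point is only that text where every block differs slightly gives a dedupe engine nothing to match on, while a general purpose compressor still does fine with it.

```python
import hashlib
import zlib

BLOCK_SIZE = 4096  # hypothetical fixed block size, chosen only for this sketch


def dedupe_ratio(data: bytes, block_size: int = BLOCK_SIZE) -> float:
    """Logical blocks divided by unique blocks (1.0 means nothing deduplicated)."""
    blocks = [data[i:i + block_size] for i in range(0, len(data), block_size)]
    unique = {hashlib.sha256(block).digest() for block in blocks}
    return len(blocks) / len(unique)


def compression_ratio(data: bytes) -> float:
    """Original size divided by zlib-compressed size."""
    return len(data) / len(zlib.compress(data))


# Log-like text: every line carries a different counter, so no two blocks match.
log_text = "".join(
    f"{i:08d} GET /index.html 200\n" for i in range(50_000)
).encode()

# Backup-like data: the same block written over and over.
repeated_backup = (b"A" * BLOCK_SIZE) * 100

print("log text dedupe ratio:      ", dedupe_ratio(log_text))
print("log text compression ratio: ", round(compression_ratio(log_text), 1))
print("repeated data dedupe ratio: ", dedupe_ratio(repeated_backup))
```

In this toy model the log text dedupes at exactly 1.0 (no savings) but compresses well, while the repeated blocks dedupe at 100 to 1.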

HP Storage Misses

Nobody is perfect; HP and 3PAR are no exception, no matter how much I may sing their praises here.

Peer Motion

When I first heard about this technology being available on both the P4000 and 3PAR platforms I assumed that they were compatible with each other, meaning you could peer motion data to/from P4000 and 3PAR. One of my friends at 3PAR clarified for me a few weeks ago that this was not the case, and David Scott mentioned that again today.

He tried to justify it by comparing it to vSphere vMotion, where you can’t do a vMotion between a vSphere system and a Hyper-V system. He could have gone deeper and said you can’t do vMotion even between vSphere hosts if the CPUs are not compatible; that would have been a better example.

So he said that most federation technologies are usually homogeneous in nature, and you should not expect to be able to peer motion from an HP P4000 to an HP 3PAR system.

Where HP’s argument kind of falls apart is that the bolt-on solutions he previously referred to as inferior do have the ability to migrate data between systems that are not the same. It may be ugly, it may be kludgey, but it can work. Hitachi even lists 3PAR F400, S400 and T800 as supported platforms behind the USP. IBM lists 3PAR and HP storage behind their SVC.

So, what I want from HP is the ability to do peer motion between at least all of their higher end storage platforms (I can understand if they never have peer motion on the P2000/MSA since it’s just a low end box). I’m not willing to accept any excuses, other than “sorry, we can’t do it because it’s too complicated”. Don’t tell me I shouldn’t expect to have it, I fully expect to have it.

Just another random thought, but when I think of storage federation and homogeneous, I can’t help but think of this scene from Star Trek VI:

GORKON: I offer a toast. …The undiscovered country, …the future.

ALL: The undiscovered country.

SPOCK: Hamlet, act three, scene one.

GORKON: You have not experienced Shakespeare until you have read him in the original Klingon.

CHANG: (in Klingonese) ‘To be or not to be.’

KERLA: Captain Kirk, I thought Romulan ale was illegal.

KIRK: One of the advantages of being a thousand light years from Federation headquarters.

McCOY: To you, Chancellor Gorkon, one of the architects of our future.

ALL: Chancellor!

SCOTT: Perhaps we are looking at something of that future here.

CHANG: Tell me, Captain Kirk, would you be willing to give up Starfleet?

SPOCK: I believe the Captain feels that Starfleet’s mission has always been one of peace.

CHANG: Ah.

KIRK: Far be it for me to dispute my first officer. Starfleet has always been…

CHANG: Come now, Captain, there’s no need to mince words. In space, all warriors are cold warriors.

UHURA: Er. General, are you fond of …Shakes ….peare?

CHEKOV: We do believe all planets have a sovereign claim to inalienable human rights.

AZETBUR: Inalien… If only you could hear yourselves? ‘Human rights.’ Why the very name is racist. The Federation is no more than a ‘homo sapiens’ only club.

CHANG: Present company excepted, of course.

KERLA: In any case, we know where this is leading. The annihilation of our culture.

McCOY: That’s not true!

KERLA: No!

McCOY: No!

CHANG: ‘To be, or not to be!’, that is the question which preoccupies our people, Captain Kirk. …We need breathing room.

KIRK: Earth, Hitler, nineteen thirty-eight.

CHANG: I beg your pardon?

GORKON: Well, …I see we have a long way to go.

For the most basic workloads it’s not such a big deal if you have vSphere and storage vMotion (or some other similar technology). You cannot fully compare storage vMotion with peer motion but for offering the basic ability to move data live between different storage platforms it does (mostly) work.

HP Scale-out NAS (X9000)

I want this to be successful, I really do, because I like to use 3PAR disks and, well, there just aren’t many NAS options out there these days that are compatible. I’m not a big fan of NetApp; I very reluctantly bought a V3160 cluster to try to replace an Exanet cluster on my last 3PAR box because, well, Exanet kicked the bucket (not the product we had installed but the company itself). I left the company not long after that, and barely a year later the company is already going to abandon NetApp and go with the X9000 (of all things!). Meanwhile their unsupported Exanet cluster keeps chugging along.

Back to X9000. It sounds like a halfway decent product. They say the main thing they lacked was snapshot support and that is there now (or will be soon). Kind of strange: Ibrix has been around for how long, and they did not have file system snapshots till now? I really have not heard much about Ibrix from anyone other than HP, who obviously sing the praises of the product.

I am still somewhat bitter about 3PAR not buying Exanet when they had the chance; Exanet is a far better technology than Ibrix. Exanet was sold for, if I remember right, $12 million, a drop in the bucket. Exanet had deals on the table (at the time) that would each have brought in more than $12 million in revenue alone. Multi petabyte deals. Here is the Exanet Architecture (and file system), as it stood in 2005. In my opinion it is very similar to the 3PAR approach (completely distributed, clustered design – Exanet treats files like 3PAR treats chunklets), except Exanet did not have any special ASICs; everything was done in software. Exanet had more work to do on their product, it was far from perfect, but it had a pretty solid base to build upon.

So, given that, I do hope X9000 does well. I mean, my needs are not that great; what I’d really like to see is a low end VSA for the X9000 along the lines of their P4000 iSCSI VSA. Just for simple file storage in an HA fashion. I don’t need to push 30 gigabits/second, I just want something that is HA, has decent performance and is easy to manage.

Legacy storage systems (EVA especially)

Let it die already. HP has been adamant they will continue to support and develop the EVA platform for their legacy customers. That sort of boggles my mind. Why waste money on that dead end platform? Use the money to give discounted incentives to upgrade to 3PAR when the time comes. I can understand supporting existing installs and bug fix upgrades, but don’t waste money on bringing out whole new revisions of hardware and software for this dead end product. David said so himself – supporting the install base of EVA and XP is supporting their 11% market share; for the other 89% of the market that they don’t have, they are going to push 3PAR/Lefthand/Ibrix.

I would find a way to craft a message to that 11% install base, subliminal messaging (à la Max Headroom, not sure why that came to my head), to make them want to upgrade to a 3PAR box, not the next EVA system.

XP/P9500 I can kinda sorta see keeping around, I mean there are some things it is good at that even 3PAR can’t do today. But the market for such things is really tiny, and shrinking all the time. Maybe HP doesn’t put much effort into this platform because it is OEM’d from Hitachi, in which case it doesn’t cost a lot to re-sell, in which case it doesn’t make a big difference if they keep selling it or stopped selling it. I don’t know.

I can just see what goes through a typical 3PAR SE’s mind (at least those that were present before HP acquired 3PAR) when they are faced with selling an EVA. If the deal closes perhaps they scream NOooooooooooooooooooo like Darth Vader in Return of the Jedi. Sure they’d rather have the customer buy HP than go buy a Clariion or something. But throw these guys a bone. Kill EVA and use the money to discount 3PAR more in the marketplace.

P2000/MSA – gotta keep that stuff, there will probably always be some market for DAS.

Insights

I had the opportunity to ask some high level questions of David and got some interesting responses. He seems like a cool guy.

3PAR Competition

David harped a lot on how other storage architectures from the big manufacturers were designed 15-20 years ago. I asked him why he thinks, given 3PAR technology is 10+ years old at this point, these other manufacturers haven’t copied it to some degree. It has obvious technological advantages; it just baffles me why others haven’t copied it.

His answer came down to a couple of things. The main point was 3PAR was basically just lucky. They were in the right place, at the right time, with the right product. They successfully navigated the tech recession when at least two other utility storage startups could not and folded (I forgot their names, I’m terrible with names). He said the big companies pulled back on R&D spending as a result of the recession and as such didn’t innovate as much in this area, which left a window of opportunity for 3PAR.

He also mentioned two other companies that were founded at about the same time to address the same market – utility computing. He mentioned VMware as one of them; the other was the inventor of the first blade system, forgot the name. VMware I think I have to dispute though. I seem to recall VMware “stumbling” into the server market by accident rather than targeting it directly. I mean, I remember using VMware before it was even VMware Workstation or GSX. It was just a program used to run another OS on top of Linux (that was the only OS VMware ran on at the time). I recall reading that the whole server virtualization movement came way later and caught VMware off guard, as much as it caught everyone else off guard.

He also gave an example in EMC and their VMAX product line. He said that EMC misunderstood what the 3PAR architecture was about – they thought it was just a basic cluster design, so EMC re-worked their system to be a cluster, and the result is VMAX. But it still falls short in several design aspects; EMC wasn’t paying attention.

I was kind of underwhelmed when the VMAX was announced, I mean sure it is big, and bad, and expensive, but they didn’t seem to do anything really revolutionary in it. Same goes for the Hitachi VSP. I fully expected both to do at least some sort of sub disk distributed RAID. But they didn’t.

Utilizing flash as a real time cache

David harped a lot on 3PAR’s ability to respond to unpredictable workloads. This is true, I’ve seen it time and time again; it’s one reason why, given the opportunity, I really don’t want to use any other storage platform at this point in time.

Something I thought really was innovative that came out of EMC in the past year or two is their FAST Cache product (I think that’s the right name): the ability to use high speed flash as both a read and a write cache, and the ability to bulk the cache up into multiple terabytes for big cloud operations.

His response was – we already do that – with RAM cache. I clarified a bit more, saying I meant scaling out the cache even more with flash, well beyond what you can do with RAM. He kind of ducked the question, saying it was a bit too technical/architectural for the crowd in the room. 3PAR needs to have this technology. My key point to him was that the 3PAR tools like Adaptive Optimization and Dynamic Optimization are great tools – but they are not real time. I want something that is real time. It seemed he acknowledged that point – the lack of the real time nature of the existing technologies as a weak point – so hopefully HP/3PAR addresses it soon in some form.

In my previous post, Gabriel commented on how the new next gen IBM XIV will be able to have up to 7.5TB of read cache via SSD. I know NetApp can have a couple TB worth of read cache in their higher end boxes. As far as I know only EMC has the technology to do both read and write. I can’t say how well it works, since I’ve never used it and know nobody that has this EMC gear, but it is a good technology to have, especially as flash matures more.

I just think how neat it would be to have, say, a 1.5-2PB system running SATA disks with an extra 100TB (roughly 5-7% of total storage) of flash cache on top of it.
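A quick sanity check of that cache-to-capacity ratio, as a small Python sketch. I am assuming decimal units here (1 PB = 1,000 TB), and the function name is just made up for illustration.

```python
TB_PER_PB = 1000  # assumption: decimal units


def cache_percent(cache_tb: float, capacity_pb: float) -> float:
    """Flash cache size as a percentage of total disk capacity."""
    return cache_tb / (capacity_pb * TB_PER_PB) * 100


# 100 TB of flash cache in front of 1.5 PB and 2 PB of SATA disk.
for capacity_pb in (1.5, 2.0):
    pct = cache_percent(100, capacity_pb)
    print(f"{capacity_pb} PB system: {pct:.1f}% flash cache")
```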

Bringing storage intelligence to the application layer

Another question I asked him was his thoughts around a broader industry trend which seems to be trying to bring the intelligence of storage out of the storage system and put it higher up in the stack – given the advanced functionality of a 3PAR system are they threatened at all by this? The examples I gave were Exchange and Oracle ASM.

He focused on Oracle, mentioning that Oracle was one of the original investors in 3PAR and as a result there was a lot of close collaboration between the two companies, including the development of the ASM technology itself.

He mentioned one of the VPs of Oracle, I forget if he was a key ASM architect or developer or something, but someone high up in the storage strategy involving ASM — in the early days this guy was very gung ho, absolutely convinced that running the world on DAS with ASM was the way to go. Don’t waste your money on enterprise storage, we can do it higher in the stack and you can use the cheap storage, save yourself a lot of money.

David said once this Oracle guy saw 3PAR storage powering an Oracle system he changed his mind, he no longer believed that DAS was the future.

The key point David was trying to make was – bringing storage intelligence higher up in the stack is OK if your underlying storage sucks. But if you have a good storage system, you can’t really match that functionality/performance/etc that high up in the stack and it’s not worth considering.

Whether he is right or not is another question, for me I think it depends on the situation, but any chance I get I will of course lean towards 3PAR for my back end disk needs rather than use DAS.

In short – he does not feel threatened at all by this “trend”. Though if HP is unwilling or unable to get peer motion working between their products when things like Storage vMotion and Oracle ASM can do this higher up in the stack, there certainly is a case for storage intelligence at the application layer.

Best of Breed

David also seemed to harp a lot about best of breed. He knocked his competitors for having a mishmash of technologies; specifically, he said they rely on market leading technologies instead of best of breed. Early in his presentation he touted HP’s market leading position in servers, and their #2 position in networking (you could say that is market leading).

He also tried to justify that the HP integrated cloud stack is comprised of best of breed technologies, it just happens to be two out of the three are considered market leading, no coincidence there.

Best of breed is really a perception issue when you get down to it. Where do you assign value in the technology? Do you just want the cheapest you can get? Do you want the most advanced software? Do you want the fastest system? Do you want the most reliable? Ease of use? Interoperability? Flexibility? Buzzword compliance? Big name brand?

Because of that, many believe these vertically integrated stacks won’t go very far. There will be some customer wins of course, but those will more often than not be wins based not on technology but on other factors: political (most likely), financial (buy from us and we’ll finance it all, no questions asked), or maybe just the warm and fuzzy feeling incompetent CIOs get when they buy from a big name that says they will stand behind the products.

I did ask David what is HP’s stance on a more open design for this “cloud” thing. Not building a cloud based on a vertically integrated stack. His response was sort of what I expected – none of the other stack vendors are open, we aren’t either so we don’t view it as an important point.

I was kind of sad that he never used the 3cV term. Really, I think it was likely the first stack that was out there, and it wasn’t even official; there was no big marketing or sales push behind it.

For me, my best of breed storage is 3PAR. It may have 1 or 2% market share (more now), so it surely is not market leading (might make it there with HP behind it), but for my needs it’s best of breed.

Switching, likewise, Extreme – maybe 1 – 1.5% market share, not market leading either, but for me, best of breed.

Fibre Channel – I like QLogic. Probably not best of breed, certainly not market leading at least for switches, but damn easy to use and it gets the job done for me. Ironically enough, while digging up links for this post I came across this, which is an article from back in 2009 suggesting QLogic should buy Extreme. I somewhat fear the most likely company to buy Extreme at this point is Oracle. I hope Oracle does not buy them, but Oracle is trying to play the whole stack game too and they don’t really have any in house networking, unlike the other players. Maybe Oracle will jump on someone like Arista instead; it would be a cheaper price, à la Pillar.

Servers – I do like HP best of course for the enterprise space – they don’t compete as well in scale out though.

VMware, on the other hand, happens to be in a somewhat unique position, being both the market leader and for the most part best of breed, though others are rapidly closing the gap in the breed area. VMware had many years with no competition.

Summary

All in all it was pretty good, a lot more formal than I was expecting, I saw 3 people I knew from 3PAR, I sort of expected to see more (I fully was expecting to see Marc Farley there! where were you Marc!).

David did harp quite a bit on using Intel processors, something that Chuck from EMC likes to harp on too. I did not ask this question of David, because I think I know the answer. The question would be: does he think HP will migrate to Intel CPUs, and away from their purpose built ASIC? I think the answer to that question is no, at least not in the next few years (barring some revolution in general purpose processors). With 3PAR’s distributed design I’m just not sure how a general purpose CPU could handle calculating the parity for what could be as many as, say, half a million RAID arrays on a storage system like the V800 without the assistance of an ASIC or FPGA. I really do not like HP pushing the Intel brand so much, being a partner of Intel and all, at least with regards to 3PAR. Because really – the Intel CPU does not do much at all in the 3PAR boxes, it never has.

Just look at IBM XIV – they do distributed RAID, though they do mirroring only, and they top out at 180 disks, even with 60 2.4GHz Intel CPU cores (120 threads), with a combined 180MB of CPU cache. Mirroring is a fairly simple task vs doing parity calculations.
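To show what I mean by mirroring being simpler than parity, here is a minimal Python sketch of textbook single-parity (RAID 5 style) XOR next to a mirror write. To be clear, this is the generic technique, not what the 3PAR ASIC or XIV actually do internally, and the function names are my own.

```python
from functools import reduce


def mirror_write(block: bytes) -> list[bytes]:
    """Mirroring: just write the same block twice, no math involved."""
    return [block, block]


def xor_blocks(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length blocks."""
    return bytes(x ^ y for x, y in zip(a, b))


def raid5_parity(data_blocks: list[bytes]) -> bytes:
    """Single parity: XOR of every data block in the stripe."""
    return reduce(xor_blocks, data_blocks)


def rebuild_missing(surviving_blocks: list[bytes], parity: bytes) -> bytes:
    """Recover one lost block by XORing the parity with the survivors."""
    return reduce(xor_blocks, surviving_blocks, parity)


stripe = [b"\x01\x02", b"\x10\x20", b"\xff\x00"]
parity = raid5_parity(stripe)

# Pretend the middle block was lost and rebuild it from the rest.
recovered = rebuild_missing([stripe[0], stripe[2]], parity)
print(parity.hex(), recovered == stripe[1])
```

Every byte written to a parity stripe has to go through that XOR (and a rebuild touches every surviving block), which is the work that adds up when it is spread across a huge number of small RAID sets.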

Frankly, I’d rather see an AMD processor in there, especially one with 12-16 cores. The chipsets that power the higher end Intel processors are fairly costly, whereas AMD’s chipset scales down to 1 CPU socket without an issue. I look at a storage system that has dual or quad core CPUs in it and I think what a waste. Things may be different if the storage manufacturers included the latest & greatest Intel 8 and 10 core processors, but thus far I have not seen anything close to that.

David also mentioned a road VMware is traveling, moving away from file systems to support VMs to a 1:1 relationship between LUNs and VMs, making life a lot more granular. He postulated (there’s a word I’ve never used before) that this technology (forgot the name) will make protocols like NFS obsolete (at least when it comes to hosting VMs), which was a really interesting concept to me.

At the end of the day, for the types of storage systems I have managed myself, and for the types of companies I have worked for, I don’t get enough bang for the buck out of many of the more advanced software technologies on these storage systems. Whether it is simple replication stuff or space reclamation, I’m still not sold on Adaptive Optimization or other automatic storage tiering techniques, or even application aware snapshots. Sure, these are all nice features to have, but they are low on my priority list; I have other things I want to buy first before I invest in these things, myself at least. If money is no object – sure, load up on everything you got! I feel the same way about VMware and their software value add strategy. Give me the basics and let me go from there. Basics being a solid underlying system that is high performance, predictable and easy to manage.

There was a lot of talk about cloud and their integrated stacks and stuff like that but that was about as interesting to me as sitting through a NetApp presentation. At least with most of the NetApp presentations I sat through I got some fancy steak to go with it, just some snacks at this HP event.

One more question I have for 3PAR – what the hell is your service processor running that requires 317W of power? Is it using Intel technology circa 2004?

This actually ended up being a lot longer than I had originally anticipated, nearly 4200 words!